Have you ever encountered content that was “not available in your region” while surfing the web?

Have you ever wanted to watch one of the shows that Netflix has available in another country?

I bet you have, especially if you live outside the United States of America. The solution to this issue is easy enough: you can use a proxy server or VPN service. But there are two issues with that approach:

- All your internet traffic goes through the VPN. This can result in noticeable delays when surfing your normal websites.

- You usually have no insight into what kind of logging your VPN provider does. So you really shouldn’t do any sensitive stuff over that connection.

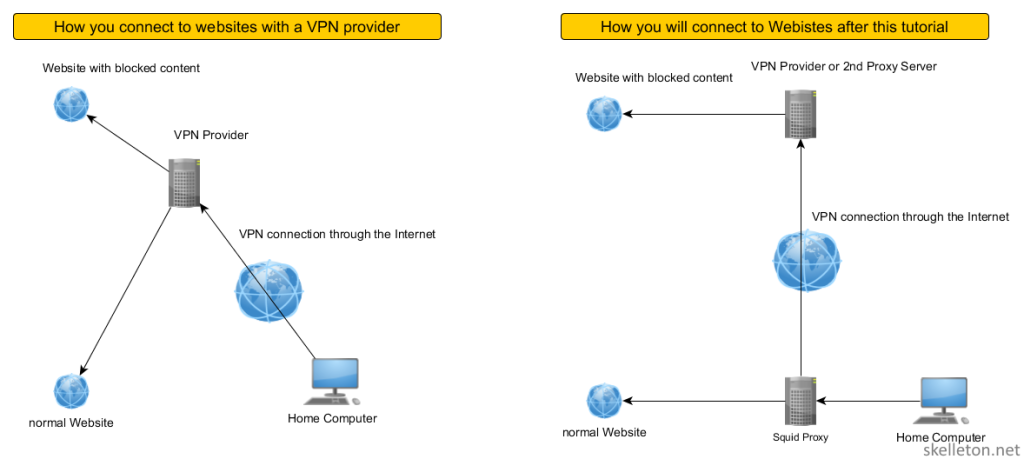

Ideally you would want all of your normal surfing to go out through your normal internet connection and all the region specific stuff through a VPN or some other proxy.

And you can actually build something to do this with Squid. Squid is an Open Source proxy server.

A proxy server sits between your browser and the websites you want to surf to. It accepts all your requests to surf to certain websites and processes them according to its configuration. Once it has determined that a request is valid, it will contact the web server for you, fetch the content you want and forward it to your browser.

Since it sits in the middle of your traffic, it is the perfect place to redirect some traffic through another connection.

This diagram visualizes the difference between the two options for you:

And this is really just the start of what Squid can do. While this tutorial will only show you a few basics of Squid and how you can redirect some content over a VPN or another Squid server, there is so much more that can be done with Squid:

- Are you on a connection with a fairly low data volume available (like some mobile contracts)? No problem! Just crank up the caching in Squid and repeated visits of the same website won’t be as demanding on your volume.

- Have kids that visit bad websites? No problem! You can use Squid to filter the internet by pretty much any criteria you want. And you can do it on a per user or per computer basis if you need to.

- You hate ads on websites, but maintaining your ad blockers across all devices is annoying? No worries! You can use Squid as your ad filter.

If you want to jump straight to the meaty parts, here is an index:

- Get started with Squid

- Basic principles of Squid configuration

- Write your personal Squid proxy configuration from scratch

- Two ways to avoid geoblocking with Squid

- Why stop here?

Get started with Squid

This tutorial is based on Debian Linux, but Squid is available for many other operating systems and the Squid configuration will be largely the same on those.

The important thing is that you already have a computer or a virtual machine on which you can run Squid.

So let’s get started!

To install Squid on Debian Jessie, simply run the following command:

apt-get install squid3

Once Squid is installed, you need to configure Squid. The default Squid configuration on Debian is very well documented.

This is great, because you can read up on a lot of things and pretty much any option in there is explained in detail.

It’s also annoying because it’s extremely long and it’s hard to get an overview of the running configuration by just looking at the squid.conf.

In this tutorial you will learn to write your Squid config from scratch, but it is a good idea to keep the original configuration around for reference:

    cp /etc/squid3/squid.conf /etc/squid3/squid.conf.bk
    echo '' > /etc/squid3/squid.conf
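Because the documented default file is so long, it can help to print only the directives that are actually active. The following is a minimal sketch, assuming the backup path used in this tutorial; the function name `active_config` is made up for this example and is not part of Squid:

```shell
# Print only the active directives of a Squid config file,
# skipping comment lines and blank lines.
# $1: path to the config file (for example the backup created above)
active_config() {
    grep -Ev '^[[:space:]]*(#|$)' "$1"
}

# Example call (assumes the backup path from this tutorial):
# active_config /etc/squid3/squid.conf.bk
```

Lines that carry a trailing comment after a directive are kept as they are, so the short explanations behind the port numbers stay visible.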

Basic principles of Squid configuration

Before you start writing your own squid configuration, you might want to learn some of the basics. This will enable you to write your own policies for squid later on.

A comprehensive introduction can be found in the official Squid documentation. But if you just need an overview for now, read on.

In Squid you write rules with access control lists (acl). There are two parts to acls:

- acl elements: define who or what is affected.

- access lists: define how an element is affected. They consist of an allow or deny and one or more acl element(s).

If you combine those two, you create acl rules. For example, to allow all traffic from your local network to access the internet, you could define a src acl element and allow it in the http_access access list:

    acl local_network src 192.168.1.0/24
    http_access allow local_network

The first line contains an element. Elements always follow this syntax:

- acl: to let Squid know you are defining an acl element.

- unique name: You can define this name freely.

- acl type: The available types are predefined and dependent on the squid version you are using. A list of acl types can be found in the official documentation.

- value list: a list of values that you want this acl element to match. The required syntax for a correct value depends on the acl type used. If you list more than one value here, the list will be evaluated with a logical OR (If one condition is met the entire element will be evaluated as true).

That’s it already. If you have a long list of values for one element you can also split them across multiple lines. Multiple definitions of an acl element are cumulative.

This means that this configuration:

acl local_network src 192.168.1.0/24 192.168.2.0/24

And this configuration:

    acl local_network src 192.168.1.0/24
    acl local_network src 192.168.2.0/24

will create a local_network acl element with exactly the same behavior in the end.

It is however not permitted to combine different acl types in this way.

This will lead to a syntax error:

    acl local_network src 192.168.1.0/24
    acl local_network dst 10.0.0.0/24

Back to the original example:

    acl local_network src 192.168.1.0/24
    http_access allow local_network

The second line of the example is the actual access list, in this case the “http_access” access list. Access lists have the following syntax:

- Access List: The available access lists are predefined, but depend on the Squid version. A list of available access lists can be found in the official squid wiki.

- allow / deny: You can either allow or deny the access to the resource represented by the access list.

- acl element(s): You can list one or more acl elements here that have to be matched by the traffic in order to activate this access list. If you list more than one acl element, they are linked by a logical “AND”.

This means all acl elements listed here have to match at the same time for the rule to apply.

You can also list an access list multiple times in one configuration file. In that case the access list statements will be checked in order, and the first statement that matches decides whether the traffic is allowed or denied.

This means that you can use multiple occurrences in a similar fashion to a logical OR. But you have to consider the order in which the statements are made.

A few examples will help explain:

    http_access allow from_local_network from_remote_network
    http_access deny all

The first rule will only match traffic if both of the listed acl elements are true. Since the names imply that both elements are src type elements, this configuration might stop any traffic through Squid, since traffic can’t have more than one source.

    http_access allow from_local_network
    http_access allow from_remote_network
    http_access deny all

This rule would allow traffic through squid if either from_local_network or from_remote_network is evaluated as true.

    http_access deny all
    http_access allow from_local_network
    http_access allow from_remote_network

This configuration would prohibit all traffic through Squid, since the rules are checked in order. While it is a good idea to have a deny all rule for http_access in your configuration, make sure that it is the last http_access rule.
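Since a misplaced deny all is easy to overlook in a growing file, a small sanity check can be handy. This is only a sketch and not a Squid feature: the function name `deny_all_is_last` is made up, and it only looks at the first two words after http_access on each line:

```shell
# Succeeds (exit status 0) only if the last http_access rule in the
# given file is "http_access deny all".
# $1: path to a squid.conf-style file
deny_all_is_last() {
    awk '$1 == "http_access" { last = $2 " " $3 }
         END { if (last != "deny all") exit 1 }' "$1"
}

# Example call (assumes the config path from this tutorial):
# deny_all_is_last /etc/squid3/squid.conf || echo "deny all is not the last http_access rule"
```

The check fails for the third example above, because there the last http_access rule is an allow rule.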

Write your personal Squid proxy configuration from scratch

Now that you know the basics, it is time that you get your hands dirty.

First you have to tell squid what interface and port it should listen on. I usually let it listen on the internal network adapter for my network:

http_port 192.168.1.10:3128

There are also some basic rules in the default configuration of Squid that you should employ:

    acl localhost src 127.0.0.1/32 ::1
    acl to_localhost dst 127.0.0.0/8 0.0.0.0/32 ::1
    acl localnet src 192.168.80.0/24
    acl SSL_ports port 443
    acl Safe_ports port 80 # http
    acl Safe_ports port 21 # ftp
    acl Safe_ports port 443 # https
    acl Safe_ports port 70 # gopher
    acl Safe_ports port 210 # wais
    acl Safe_ports port 1025-65535 # unregistered ports
    acl Safe_ports port 280 # http-mgmt
    acl Safe_ports port 488 # gss-http
    acl Safe_ports port 591 # filemaker
    acl Safe_ports port 777 # multiling http
    acl Safe_ports port 901 # SWAT
    acl CONNECT method CONNECT
    http_access deny !Safe_ports
    http_access deny CONNECT !SSL_ports

This configuration prevents users from connecting to unusual ports. Especially if this proxy is for more than you and your family, this will help you limit traffic to sane destinations.

Since you want to pretend to be in another country, you should disable the X-Forwarded-For header.

Otherwise Squid will add the IP address of your client to every request and thereby tell the servers you are contacting that the request came through a proxy:

forwarded_for delete

Next you should probably tell Squid who is allowed to access the proxy. The simplest form of doing that is by using an src type acl element:

    acl local_network src 192.168.1.0/24
    http_access allow local_network

Need granular user access? Use Active Directory Authentication and Authorization.

If network-based authentication is not enough for you, you can use external authentication helpers with Squid. This part of the configuration might differ slightly based on the operating system and the way your system is joined to the Active Directory.

This configuration works on Debian Jessie joined to the AD through Samba:

    auth_param negotiate program /usr/lib/squid3/negotiate_kerberos_auth
    auth_param negotiate children 10
    auth_param negotiate keep_alive off
    auth_param basic program /usr/bin/ntlm_auth --helper-protocol=squid-2.5-basic
    auth_param basic children 10
    auth_param basic realm please login to the squid server
    auth_param basic credentialsttl 2 hours

As you can see, there is no acl element configured yet. You can use the following configuration to allow any authenticated user access to your proxy:

    acl auth_users proxy_auth REQUIRED
    http_access allow auth_users
    http_access deny all

But since you have Active Directory, you probably want to authorize access based on group membership. For that you will need to define external acls and use helper scripts or programs. But don’t worry, everything you need ships with the default Squid install. Authorizing the Active Directory group proxy_users would look something like this:

    external_acl_type testForGroup %LOGIN /usr/lib/squid3/ext_wbinfo_group_acl -K
    acl trusted_users external testForGroup proxy_users
    http_access allow trusted_users
    http_access deny all

Basically you have to create an external acl that calls the helper program with the correct parameters. Once that is done, you can use this external acl like a normal acl of the type external. In the acl statement you pass the name of the external acl and the value which it should check as parameters.

Are websites always loading too slow?

Squid is a very good web cache. If you are on a high latency or limited volume internet connection, you might want to take advantage of Squid’s capabilities in this regard.

Caching will speed up the loading of websites, because resources such as pictures or static websites will be delivered by your squid server itself instead of getting them again through the internet.

Depending on how large your cache is going to be it might increase the hardware requirements of squid quite a bit.

This is really just the bare minimum configuration that you need to get Squid working as a web cache. So if you have time, do some research and make it even better:

    cache_mem 512 MB
    maximum_object_size_in_memory 1 MB
    cache_dir aufs /var/spool/squid3 2048 16 256
    maximum_object_size 10 MB

Let’s see what each option does:

- cache_mem: defines how large the RAM cache of squid should be.

- maximum_object_size_in_memory: defines how large an object can be to be cached in RAM. The default is 512KB

- cache_dir: used to configure a cache directory on the disk. The default is not to have a disk cache.

- maximum_object_size: defines the maximum size of any object in the disk cache. The default is 4MB

The cache_dir directive has the following syntax:

- cache_dir: calls the directive

- cache directory type: I chose aufs because it starts a separate thread for the cache access. If you used ufs, which does not start the separate thread, the disk I/O from the cache operation could block the main Squid thread.

- /path/to/cache/directory: point it to wherever the cache should be saved on the disk. Make sure that the Squid process has read and write access to that folder.

- options: in this case there are 3 options:

  - size of the disk cache in megabytes

  - number of sub folders on the 1st level of the cache directory. The default is 16.

  - number of sub folders on the 2nd level of the cache directory. The default is 256.
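To get a feeling for what the two sub folder options mean on disk, the arithmetic can be sketched in a few lines of shell. The function names `cache_dir_line` and `cache_subdir_count` are made up for this example; the values are the ones from the configuration above:

```shell
# Build a cache_dir line for the aufs store type.
# $1: cache directory, $2: size in MB, $3: 1st level folders, $4: 2nd level folders
cache_dir_line() {
    printf 'cache_dir aufs %s %s %s %s\n' "$1" "$2" "$3" "$4"
}

# Total number of 2nd level folders in the cache directory:
# every 1st level folder contains the full set of 2nd level folders.
# $1: 1st level folders, $2: 2nd level folders
cache_subdir_count() {
    echo $(( $1 * $2 ))
}

cache_dir_line /var/spool/squid3 2048 16 256
cache_subdir_count 16 256
```

With the defaults of 16 and 256 this comes to 4096 folders, which is why the first start with a fresh disk cache can take a moment while the folder structure is created.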

If you followed the tutorial so far you should have a Squid configuration that covers the basics. So it’s time to get to the important part.

Two ways to avoid geoblocking with Squid

You have two options to route traffic selectively through other public interfaces with Squid:

- Use a Squid cache peer

- Use a different interface on the Squid host. This may also require you to set up policy based routing.

I personally use a combination of both options. I have a Squid server running in a virtual machine that is connected to my network via VPN. This is also the implementation I recommend to you.

The first option is easier to troubleshoot, since cache hierarchies are a fairly common and well documented use of Squid, while the VPN and source based routing of the second option add another layer of complexity that you may not think of immediately when troubleshooting.

In addition you can use the Virtual Machine Squid is running on for other purposes as well.

But if you have an existing contract with a VPN provider that you cannot easily get out of, or if you need multiple destinations, then the second option might be the one for you.

How to mask your origin with the help of a Squid cache peer

First you need a Server in the country your traffic should be routed to. Personally I use vultr (affiliate link). Their Virtual Servers are quite fast for the price. Another very reputable option is Digital Ocean. Both of these services have a pay as you go model and bill the server by the minute, so they are also great for just testing some stuff and then killing the machine again.

If you want a possibly cheaper option, you can scour sites like lowendbox.com and lowendtalk.com for good deals. I recommend at least taking a KVM virtual machine, as they are more flexible than their OpenVZ counterparts.

Once you have your virtual server running, install Squid on it. Then set up a minimal configuration for squid:

    http_port 192.168.2.11:3128
    forwarded_for delete
    acl peer_host src 192.168.1.10/32
    http_access allow peer_host

The interface used in the example is an internal interface that is only accessible via site to site VPN. You could also run Squid on the public interface, but I advise strongly against it.

If you do however run it on a public interface, you need to take more measures to secure Squid and you should encrypt the traffic between both squid servers.

Once you have the configuration in place you should start Squid on your remote server:

service squid3 restart

Once squid is running on the remote machine it’s time to turn your attention back to your local Squid server.

On your local server, add the new Squid proxy as a cache peer:

cache_peer 192.168.2.11 parent 3128 0 default

This is a basic version of the cache peer directive, but for our purpose it is enough. The following options are used:

- cache_peer: calls the directive

- remote host: IP address or fully qualified domain name of the host

- relation: this is either parent or sibling. Squid will always try to send traffic through a parent host (as long as acls permit it). Siblings on the other hand are only checked if they already have a copy of the required object and if not squid will go through a parent or direct connection.

- http port: the port you set the other squid to listen on

- icp port: the Internet Cache Protocol is a way in which Squid servers can request and exchange cache objects. It is necessary in more complex hierarchies, but for this setup it is not needed.

- options: You can use this to influence failover and load balancing in larger caches. The default is just fine for this use case.

Since you don’t want to route all your traffic through the cache peer, you need to set up two acls to tell Squid which traffic should go through the cache peer. For each domain you should set up a pair of acl directives just like in this example:

    acl domain_to_remote_proxy dstdomain .whatismyip.com
    acl ref_to_remote_proxy referer_regex [^.]*\.whatismyip\.com.*
    # redirect cbs
    acl domain_to_remote_proxy dstdomain .cbs.com
    acl ref_to_remote_proxy referer_regex [^.]*\.cbs\.com.*

The first acl entry is needed to reroute traffic that is going directly to the target domain. The second entry reroutes all traffic that has the target domain as its referer. This is needed because some content providers might host their actual content on different domains and in that case the referer would be the domain you want to reroute.
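If the list of domains grows, typing each pair by hand gets tedious. A minimal sketch of a generator, assuming you keep the two acl names used in this tutorial; the function name `geo_acl_pair` is made up for this example:

```shell
# Print the pair of acl lines for one domain.
# $1: domain without the leading dot, for example cbs.com
geo_acl_pair() {
    # Escape the dots so that they match literally in the regex
    escaped=$(printf '%s' "$1" | sed 's/\./\\./g')
    printf 'acl domain_to_remote_proxy dstdomain .%s\n' "$1"
    printf 'acl ref_to_remote_proxy referer_regex [^.]*\\.%s.*\n' "$escaped"
}

geo_acl_pair cbs.com
```

The output can be pasted into squid.conf, or a loop over several domains can be redirected into a separate file. How you include that file in your configuration is left open here.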

As you can see, each subsequent entry in the list has the same name again. This makes writing the actual access lists easier:

    cache_peer_access 192.168.2.11 allow domain_to_remote_proxy
    cache_peer_access 192.168.2.11 allow ref_to_remote_proxy
    cache_peer_access 192.168.2.11 deny all
    never_direct allow domain_to_remote_proxy
    never_direct allow ref_to_remote_proxy

The cache peer directives allow all traffic matching the acls to access the cache parents, but deny any other traffic access to it. In effect they reroute only the desired traffic.

The never_direct setting is a matter of taste. The setting in the example prevents Squid from connecting directly to these domains. This means that if the cache peer is unreachable, Squid won’t be able to connect to those domains.

I recommend that you leave those in at least while you are testing the system. This way it is immediately apparent when something doesn’t work.

Now just restart squid and enjoy your unblocked content:

service squid3 restart

How to mask your origin by changing the TCP outgoing address

In order to make use of this you will have to connect either the squid host or your firewall to your remote VPN location.

If you connect the Squid host directly to the VPN, you will need policy based routing to make sure that all traffic sent from the VPN network adapter actually leaves through the VPN. Without it, the traffic Squid sends out on the VPN adapter will still go out through your default gateway. This quick introduction to policy based routing should get you started.

If you terminate your VPN on your firewall, you can just give Squid two network adapters in your network and then reroute all traffic from one adapter through the VPN with firewall rules.
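As a rough sketch of what source based routing with iproute2 can look like, the following function only prints the two commands instead of running them, so you can review them first. All values are placeholders: the gateway 192.168.3.254, the device tun0 and the routing table number 100 are assumptions for illustration, and 192.168.3.1 stands for the address of the VPN adapter. Your VPN setup may need different or additional routes:

```shell
# Print (do not run) the iproute2 commands for source based routing.
# $1: IP of the VPN adapter, $2: VPN gateway, $3: VPN device, $4: routing table number
print_vpn_policy_routes() {
    # The separate routing table gets its own default route through the VPN
    printf 'ip route add default via %s dev %s table %s\n' "$2" "$3" "$4"
    # Traffic sent from the VPN adapter's address is looked up in that table
    printf 'ip rule add from %s table %s\n' "$1" "$4"
}

# All four values are placeholders for illustration
print_vpn_policy_routes 192.168.3.1 192.168.3.254 tun0 100
```

Once the printed commands look right for your network, they have to be run as root, and they do not survive a reboot unless you add them to your network configuration.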

In either case the squid configuration will be the same. Once you set up your VPN connection in the most suitable way for you, you can start writing the acls that will be used to redirect some of your traffic.

The acl elements themselves are actually the same as in the example for using a squid cache peer. They will simply be applied to another access list.

    # redirect whatismyip.com for testing only
    acl domain_to_remote_proxy dstdomain .whatismyip.com
    acl ref_to_remote_proxy referer_regex [^.]*\.whatismyip\.com.*
    # redirect cbs
    acl domain_to_remote_proxy dstdomain .cbs.com
    acl ref_to_remote_proxy referer_regex [^.]*\.cbs\.com.*

The first acl entry is needed to reroute traffic that is going directly to the target domain. The second entry reroutes all traffic that has the target domain as its referer. This is needed because some content providers might host their actual content on different domains and in that case the referer would be the domain you want to reroute.
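Before relying on a referer pattern, you can try it against a few sample referer values on the command line. This sketch assumes that grep -E treats this simple pattern the same way Squid does; the function name `referer_matches` and the sample URLs are made up for this example:

```shell
# Succeeds if the referer value matches the pattern.
# $1: extended regular expression, $2: referer value to test
referer_matches() {
    printf '%s\n' "$2" | grep -Eq -- "$1"
}

pattern='[^.]*\.cbs\.com.*'
for referer in 'http://www.cbs.com/shows/' 'http://cbs.com/' 'http://www.example.org/'; do
    if referer_matches "$pattern" "$referer"; then
        echo "match:    $referer"
    else
        echo "no match: $referer"
    fi
done
```

Note that the pattern requires a dot directly in front of the domain name, so a referer that uses the bare domain without a subdomain is not matched by it. If a site sends such referers, the pattern needs to be adjusted.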

Now that you have the acls ready, you can use them on access lists:

    tcp_outgoing_address 192.168.3.1 domain_to_remote_proxy
    tcp_outgoing_address 192.168.3.1 ref_to_remote_proxy

This configuration sends all traffic that matches the acls through the interface with the IP 192.168.3.1. This IP should obviously be replaced by the IP of your VPN.

Now just restart squid and enjoy your unblocked content:

service squid3 restart

Why stop here?

Your squid server is running and you have accomplished your goal. If you get bored again or if you want to improve your experience while browsing you can look at other things that can be done with squid.

- Block Ads

- Protect your privacy. For example by stripping the referer from http requests or by randomizing the user agent header.

- Fine tune the caching to conserve bandwidth

- Make squid a transparent proxy for your network so that all http and https traffic goes through automatically

- Block content from employees or kids
